Why Security Engineers Who Ignore Claude Code Will Be Obsolete by 2027

Claude Code Is Changing What “High Performance” Looks Like in Security Teams

As I write this, it is May 2026, and a lot of cybersecurity engineers still think tools like Claude Code are developer toys: something you use to generate boilerplate faster, or to save a junior engineer from writing regex.

Useful, maybe, but not critical.

That mindset is going to age badly.

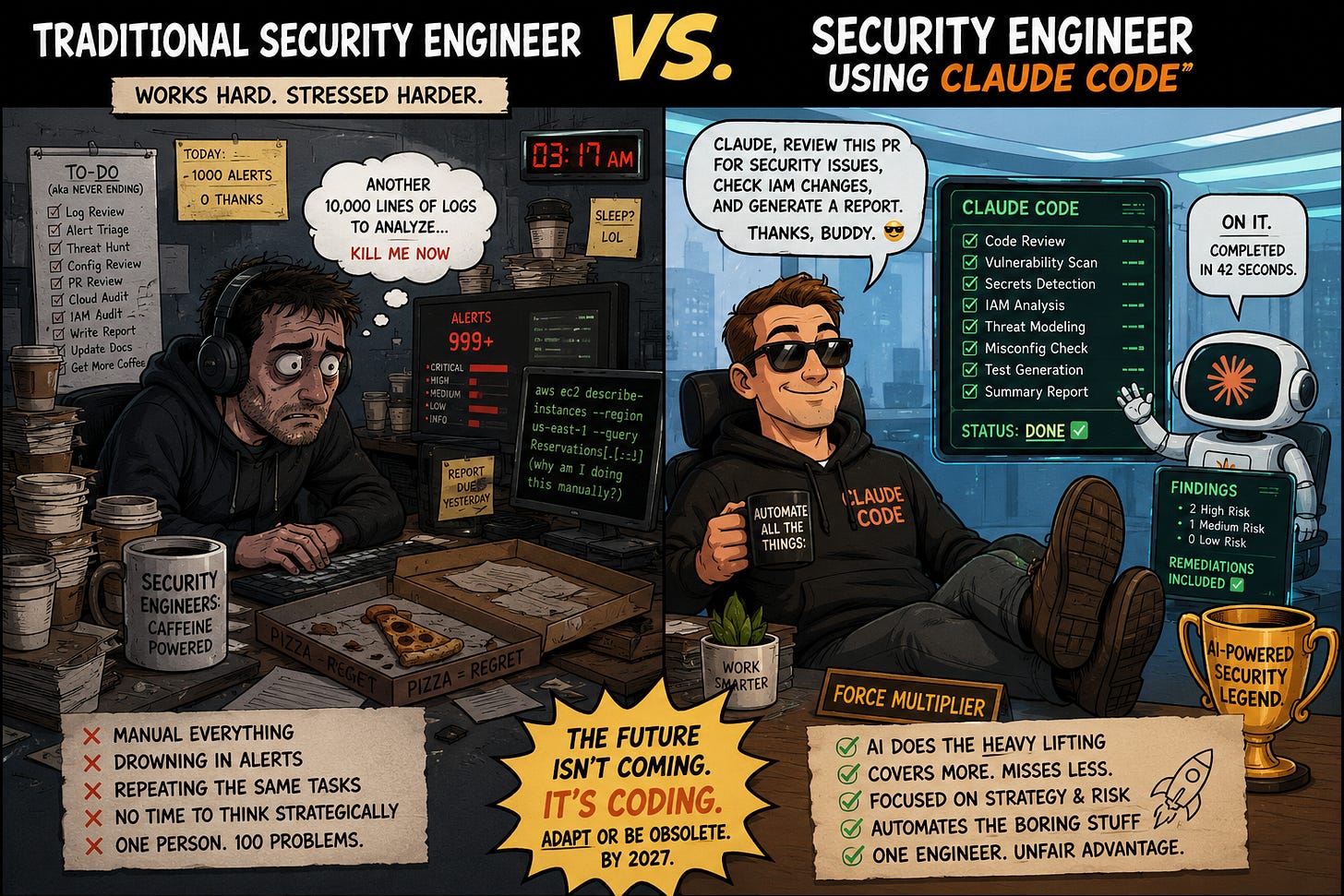

A few months ago, I spoke to a security engineer who proudly told me he avoids AI coding tools completely. His reasoning was simple: “I don’t trust AI-generated code.” At the same time, another engineer on his team had quietly started using Claude Code to review pull requests, explain infrastructure changes, generate security test cases, and summarize risks for developers.

A few weeks later, management started noticing something interesting.

The engineer using Claude Code was consistently reviewing more changes, responding faster, finding issues earlier, and producing cleaner documentation. Not because he was smarter. Not because he worked longer hours. But because he had effectively given himself an AI-powered junior security team.

That gap compounds very quickly.

What’s happening right now isn’t just “AI helping people code faster.” We’re in the early stages of a real shift in how software gets built — and by extension, how it gets secured. Security engineers who ignore that shift aren’t just missing a productivity upgrade. They risk becoming genuinely less competitive, not because AI replaces them, but because the engineers who figure this out early will outperform them by a wide margin. That gap is already starting to show.

Anthropic’s own 2026 report on agentic coding describes a world where AI systems handle multi-step workflows, navigate large codebases, debug issues, write tests, and coordinate across files. These aren’t chatbots anymore — they’re closer to autonomous engineering teammates.

That changes the economics of security work.

The problem cybersecurity has always had

Security engineering has one chronic, unsolved problem: there are never enough humans. Too many alerts, too many pull requests, too many cloud resources to audit, too many misconfigurations to catch manually. The work scales faster than headcount ever can.

AI changes that equation.

Think about what a well-prompted Claude Code workflow could do with a single pull request: review the architecture changes, flag trust boundary violations, map potential attack paths, check IAM permissions, review Terraform drift, suggest remediations, write security tests, and produce plain-English explanations for developers and documentation for auditors. Now imagine running that across hundreds of PRs a week.
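To make that concrete, here is a minimal sketch of how the checklist above could be turned into a single review prompt for one pull request. The `PullRequest` class and `build_review_prompt` function are hypothetical names I made up for illustration, not part of Claude Code, and the checklist wording is only one way to phrase the steps.

```python
from dataclasses import dataclass

# Review steps taken from the paragraph above; the exact wording is illustrative.
REVIEW_CHECKLIST = (
    "Review architecture changes",
    "Flag trust boundary violations",
    "Map potential attack paths",
    "Check IAM permissions",
    "Review Terraform drift",
    "Suggest remediations",
    "Write security tests",
    "Explain findings in plain English for developers",
    "Produce documentation for auditors",
)


@dataclass
class PullRequest:
    """Hypothetical container for the PR data a review needs."""
    number: int
    title: str
    diff: str


def build_review_prompt(pr: PullRequest, checklist=REVIEW_CHECKLIST) -> str:
    """Assemble one review prompt that covers every checklist item."""
    steps = "\n".join(f"{i}. {item}" for i, item in enumerate(checklist, start=1))
    return (
        f"You are reviewing pull request #{pr.number}: {pr.title}\n\n"
        f"Work through each step and report findings per step:\n{steps}\n\n"
        f"Diff:\n{pr.diff}\n"
    )
```

The returned string is plain text, so it could be handed to whichever assistant your team uses. Running the same function over every open PR is what turns a one-off review into a repeatable workflow.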

That’s not theoretical. Anthropic has already released Claude Code capabilities specifically focused on security review of codebases.

The shift isn’t “AI writes code.” The shift is that security engineers are becoming orchestrators of intelligent systems. And most people haven’t started preparing for that yet.

What actually gets more valuable

The old model rewarded memorization: learn the tools, learn the commands, learn the dashboards, pass the cert, repeat. A lot of that low-level implementation work is exactly what AI is getting good at — reviewing logs, writing detection rules, checking infrastructure drift, validating configurations.

What doesn’t get commoditized is the judgment layer above that. Someone still has to define security policies, establish guardrails, validate what the AI produces, understand business risk, and catch it when it’s confidently wrong. Ironically, AI is making senior-level thinking more valuable, not less. The problem is that a lot of current security work isn’t senior-level thinking — it’s repetitive execution that just happens to require a human. That’s what’s at risk.
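One way to picture that judgment layer is a small triage step that sits between the AI's findings and the developers. The sketch below is an assumption about how such a step might look, not a description of any real tool: `Finding`, `triage`, and the severity labels are all hypothetical. It rejects findings that cite files the PR never touched (a cheap check for confidently wrong output) and routes anything high-severity to a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical shape of one AI-reported issue."""
    severity: str  # assumed labels: "low", "medium", "high", "critical"
    file: str
    summary: str


# Severities that always require a human decision (an assumed policy).
HUMAN_REVIEW_SEVERITIES = {"high", "critical"}


def triage(findings, changed_files):
    """Sort findings into rejected, needs_human, and auto_comment buckets."""
    buckets = {"rejected": [], "needs_human": [], "auto_comment": []}
    changed = set(changed_files)
    for finding in findings:
        if finding.file not in changed:
            # The finding points at a file outside the PR: treat as unverified.
            buckets["rejected"].append(finding)
        elif finding.severity.lower() in HUMAN_REVIEW_SEVERITIES:
            buckets["needs_human"].append(finding)
        else:
            buckets["auto_comment"].append(finding)
    return buckets
```

The policy itself (which severities need a person, what counts as unverified) is exactly the part a senior engineer still has to define.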

“But AI makes mistakes”

Yes. So do humans, at much lower throughput.

The question isn’t whether Claude Code is perfect. It’s whether a security engineer using it well becomes significantly more effective than one who isn’t. The answer is clearly yes. More code reviewed, larger environments covered, patterns spotted faster, repetitive work automated. That productivity gap compounds.

The same thing happened with cloud adoption. There was a window where some infrastructure engineers dismissed cloud skills as hype. Then the market shifted, and the engineers who had ignored it found themselves maintaining legacy systems while everyone else moved into architecture and platform roles. The same pattern is forming around AI-native engineering, and it’s moving faster.

Pressure from both directions

It’s worth noting that defenders aren’t the only ones with access to these tools. Google has warned that AI-assisted hacking activity is no longer theoretical: there’s evidence of AI being used to help identify and exploit vulnerabilities. So security teams face pressure from both sides. They need AI to scale their defenses at the same time attackers are using it to scale their offense. Manual-only workflows simply can’t keep pace with that indefinitely.

By 2027, the most effective security engineers probably won’t be the people manually writing every detection rule themselves. They’ll be the people who know how to build secure AI-assisted workflows, design agent guardrails, orchestrate multiple tools together, and validate outputs intelligently, combining human expertise with machine-scale execution.
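As a rough illustration of what an agent guardrail can mean in practice, here is a sketch of a command allowlist that an agent's proposed shell commands would have to pass before anything runs. The allowlist contents and the `is_allowed` function are my own assumptions for the example. It is not a complete sandbox, and it assumes approved commands are later executed as an argument list rather than through a shell.

```python
import shlex

# Assumed policy: read-only subcommands the agent may run without approval.
ALLOWED_COMMANDS = {
    "git": {"diff", "log", "show", "status"},
    "terraform": {"plan", "validate", "fmt"},
}

# Characters that could chain or redirect commands if a shell were involved.
SHELL_METACHARACTERS = set(";|&$`<>")


def is_allowed(command_line: str) -> bool:
    """Return True only for allowlisted program/subcommand pairs."""
    if SHELL_METACHARACTERS & set(command_line):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes or similar: refuse rather than guess.
        return False
    if len(tokens) < 2:
        return False
    program, subcommand = tokens[0], tokens[1]
    return subcommand in ALLOWED_COMMANDS.get(program, set())
```

For example, `is_allowed("terraform plan")` passes while `is_allowed("terraform apply")` does not, so anything that changes infrastructure still needs a human in the loop.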

In some ways, security engineering is starting to look more like system design or even management. Not people management, but coordinating intelligent systems toward security outcomes. That’s a genuinely different skillset from traditional security operations, and honestly, a lot of people in the field are resisting the transition because it threatens an identity they spent years building.

That’s understandable. It doesn’t change what’s happening.

The practical advice is simple: you don’t need to become an AI researcher or a machine learning engineer. But you do need to understand how agentic systems work, how AI changes attack surfaces, and how to use tools like Claude Code as a genuine force multiplier rather than a novelty. The engineers who start that learning curve now will have a meaningful head start on the ones who wait until the market makes it unavoidable.

Want to Learn How to Do This in Practice?

If you want to apply this in practice, I’ve created a practical course designed specifically for cybersecurity professionals and software engineers.

The course walks through how to rethink your mental model of Claude Code and turn it into a living member of your cybersecurity team.

You can get it for a special discount below. Paid subscribers get it for free. Thanks for supporting this newsletter!

👉 Mastering Claude Code For Cybersecurity Professionals

Link for Paid Subscribers below: