What the 2026 Agentic Coding Trends Report Means for Cybersecurity

(And How to Prepare)

I read through Anthropic’s Agentic Coding Trends Report for 2026 and it makes one thing very clear: software development is no longer about writing code.

It’s about orchestrating systems that write, test, deploy, and even maintain code on your behalf.

For many, this sounds like a productivity story. Faster releases. Lower costs. More innovation. But if you work in cybersecurity, you should read it very differently. This is not just a shift in how software is built .. it is a shift in what you are actually securing. And most organisations are not prepared for that change.

From Code to Autonomous Systems

For decades, cybersecurity has focused on protecting relatively well-defined assets: applications, infrastructure, identities, and data. Even as cloud and DevOps introduced complexity, the underlying model remained stable. Humans built systems, and security was layered around them.

Agentic coding disrupts that model completely.

We are now moving into a world where systems are no longer static. They are continuously evolving, partially autonomous, and increasingly built by non-human actors. According to the report, agents are no longer limited to generating snippets of code or assisting with isolated tasks. They are building complete systems over extended periods, coordinating with other agents, and operating with minimal human intervention.

From a security perspective, this fundamentally changes the nature of the problem.

You are no longer securing an application that was designed and deployed at a specific point in time. You are securing a system that is constantly being created, modified, and extended by autonomous components.

That shift alone should be enough to make most security leaders pause.

But there’s an important nuance the report highlights: while engineers use AI in roughly 60% of their work, they report being able to “fully delegate” only 0–20% of tasks. This means the remaining 80–00% still involves active human collaboration with AI .. setting up prompts, supervising output, and applying judgment. For security professionals, this collaborative reality is actually an opportunity.

It means there are still natural checkpoints in the workflow where security oversight can be embedded, provided you design for them now before full delegation becomes the norm.

A New Kind of Attack Surface

As these systems evolve, so does the attack surface .. and not in incremental ways.

One of the most significant changes is the introduction of the “prompt layer.” Instructions given to agents effectively shape their behaviour, which means that manipulating those instructions becomes a viable attack vector. This is not traditional input validation; it is behavioural manipulation at the system level.

At the same time, agents are deeply integrated into tools and services. They call APIs, access cloud resources, interact with databases, and trigger workflows. Each of these interactions creates a new pathway that can be exploited if not properly secured.

There is also the question of memory and state. Long-running agents maintain context over time, which allows them to handle complex tasks .. but also creates opportunities for subtle, persistent manipulation. The report notes that agents now “plan, iterate, and refine across dozens of work sessions, adapting to discoveries, recovering from failures, and maintaining coherent state throughout complex projects.”

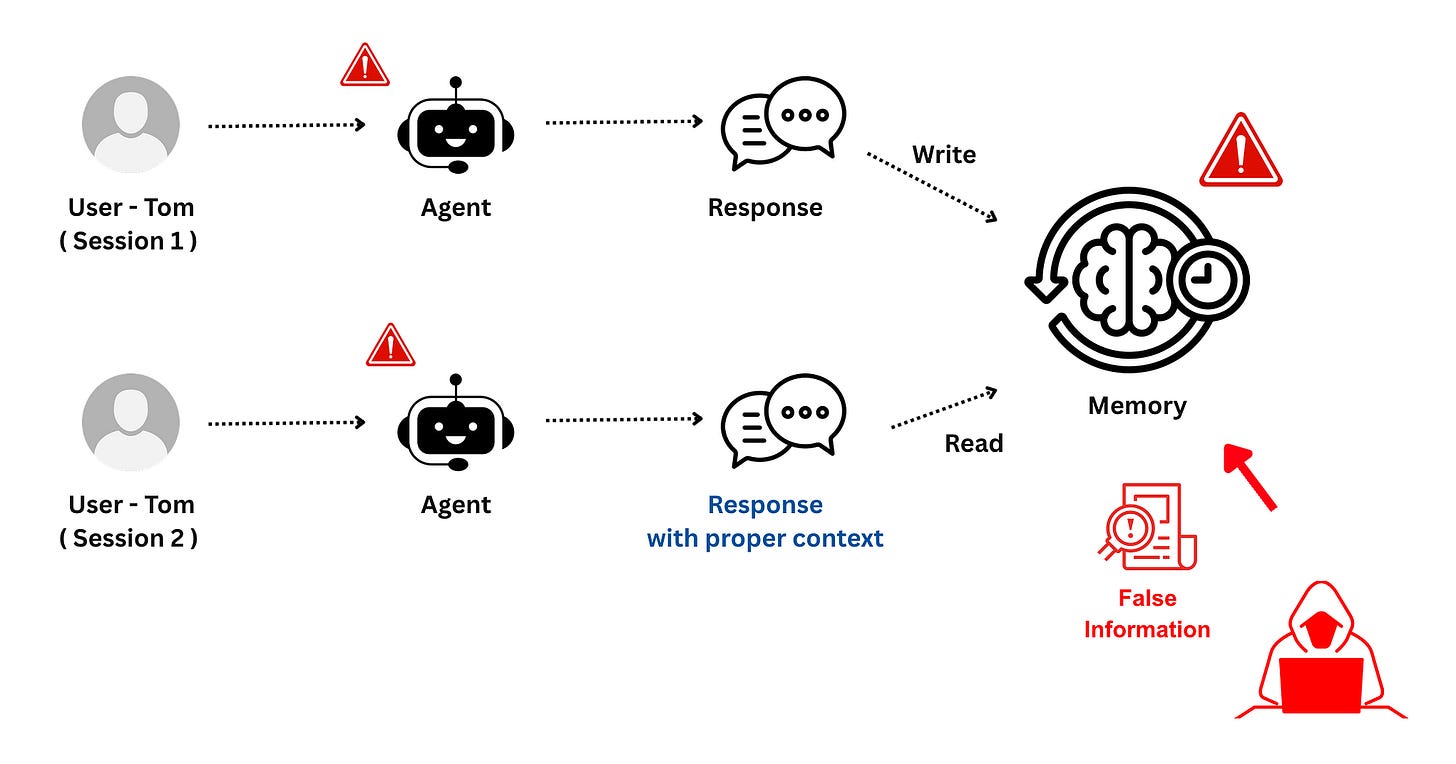

Memory Poisoning an Agent Session

If an attacker can influence that memory, they can gradually steer the system in unintended directions over the course of hours or even days of autonomous operation. This is a fundamentally different threat model from one-shot code generation .. it demands continuous monitoring, not just point-in-time review.

Multi-Agent Coordination Introduces Lateral Movement Risks

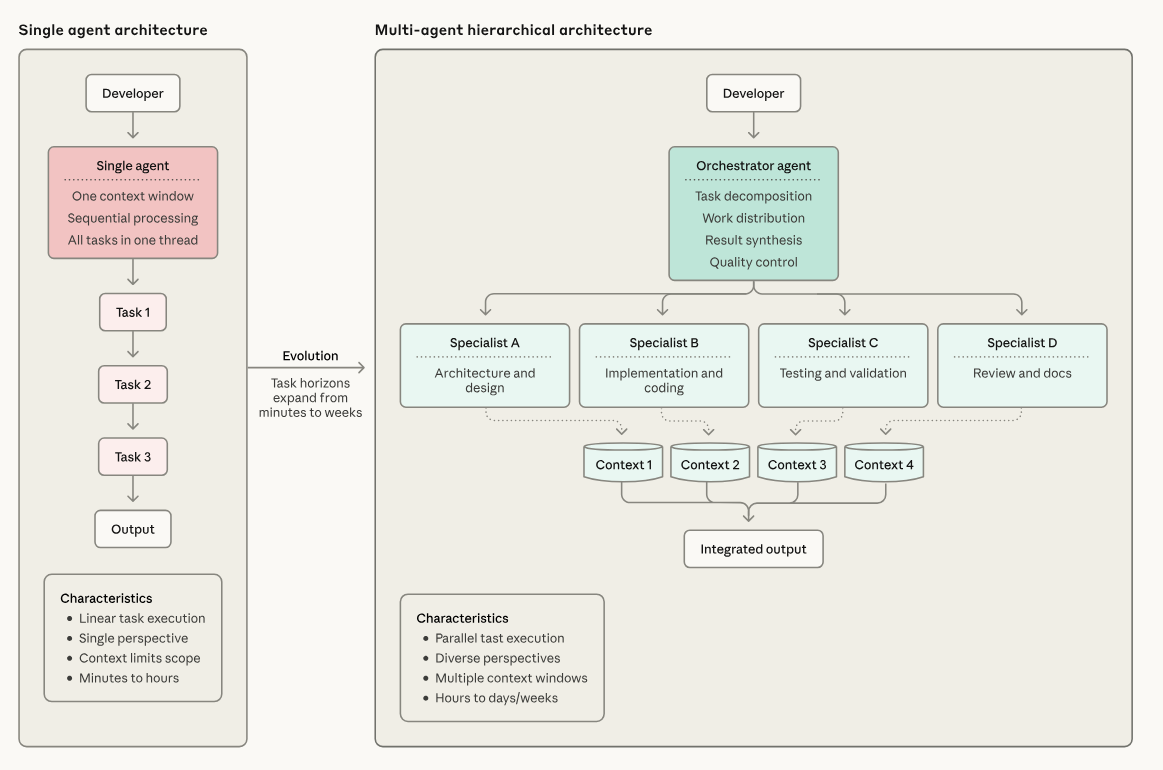

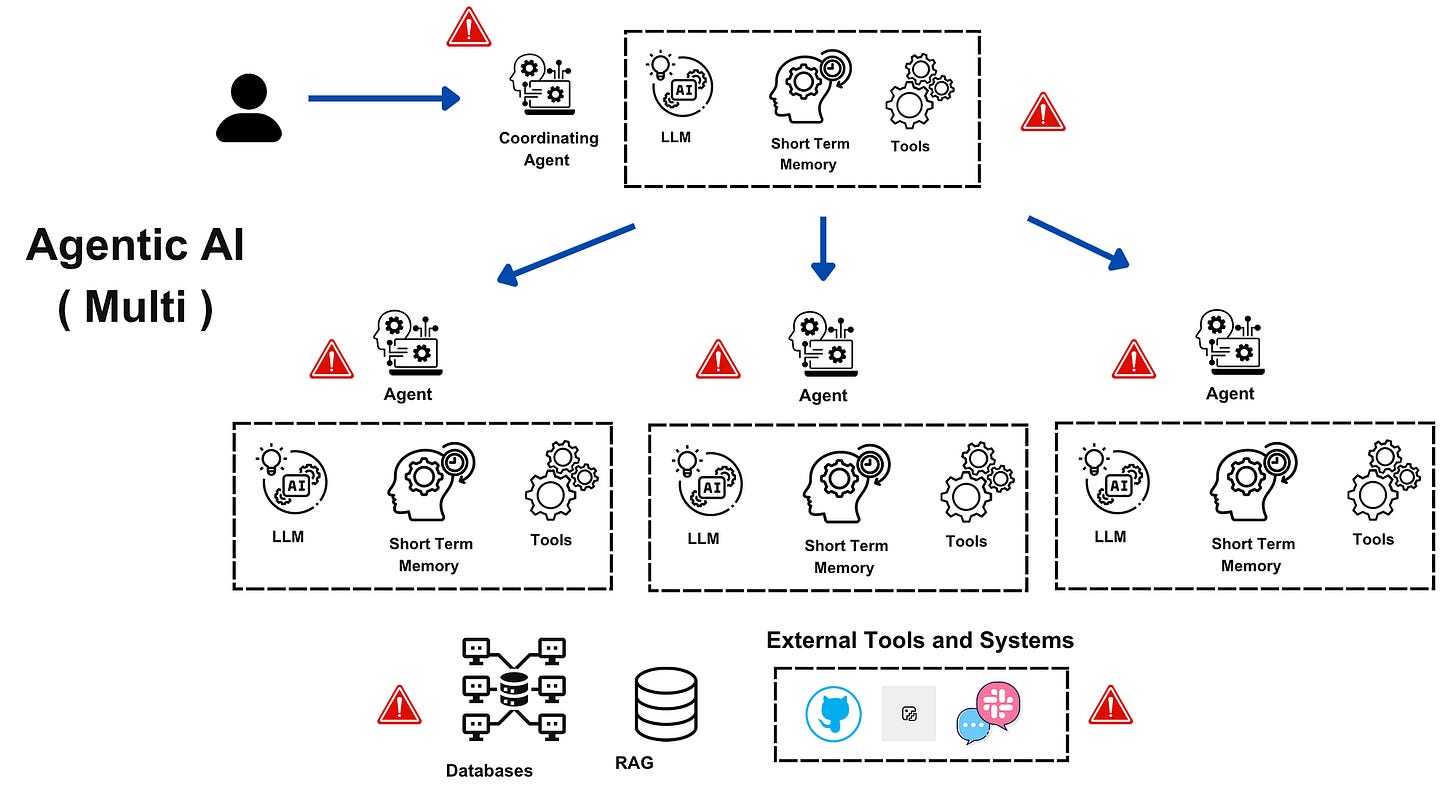

Perhaps most concerning is the rise of multi-agent systems. The report describes a shift from single-agent workflows to orchestrated architectures where a central agent coordinates specialised sub-agents working in parallel, each with their own dedicated context window.

When multiple agents interact, collaborate, and depend on each other, traditional trust boundaries start to blur.

https://resources.anthropic.com/hubfs/2026%20Agentic%20Coding%20Trends%20Report.pdf

A compromised orchestrator agent is analogous to a compromised domain controller .. it can influence every downstream agent’s behaviour. A poisoned sub-agent can introduce subtle defects that propagate through the system before anyone notices. This creates cascading failure modes that are difficult to detect and even harder to contain.

This demands that security teams apply zero-trust principles to agent-to-agent communication. Each agent should operate with minimal necessary permissions, and inter-agent instructions should be validated rather than implicitly trusted. Organisations need to start developing threat models specifically for multi-agent architectures now, before these systems become widespread.

Speed Is Now a Security Problem

One of the more subtle insights in the report is that productivity gains are not just about doing things faster. They are about doing more.

Engineers are not simply completing tasks in less time .. they are producing significantly more output. More features, more fixes, more experiments. The report reveals that about 27% of AI-assisted work consists of tasks that wouldn’t have been done otherwise: scaling projects, building nice-to-have tools, and exploratory work that wouldn’t be cost-effective if done manually.

Engineers are fixing more “papercuts” .. minor issues typically deprioritised .. because AI makes addressing them feasible. Tasks that once required weeks can now be completed in hours.

While this is a major advantage from a business perspective, it introduces a serious challenge for security teams. More output inevitably means more potential vulnerabilities, more dependencies, and more integration points.

The result is a growing gap between how fast systems are being built and how fast they can be secured.

If security remains slow and manual, it will quickly become irrelevant in environments where development happens at machine speed. Security scanning, policy enforcement, and guardrails need to run inline with agent workflows — not as a separate gate that teams route around when it slows them down. The report’s discussion of “agentic cyber defence systems” responding at machine speed isn’t aspirational .. it’s becoming a necessity to match the velocity of both your own organisation and potential attackers.

The Dual-Use Reality

The report acknowledges an uncomfortable truth: the same capabilities that benefit defenders will also benefit attackers.

As agents become more capable, they lower the barrier to entry for offensive activities. Tasks that once required specialised skills — such as exploit development or large-scale reconnaissance .. can now be partially automated. This allows attackers to operate faster, experiment more, and scale their efforts in ways that were previously impractical.

But the report also offers an important insight about how the defensive advantage can be maintained. It describes “three multipliers” driving acceleration: agent capabilities, orchestration improvements, and better use of human experience.

These compound to create step-function improvements rather than linear gains. Organisations that invest in all three .. not just better tools, but better coordination of those tools with human expertise .. create a defensive advantage that is difficult for attackers to replicate, because attackers typically lack the institutional knowledge and oversight infrastructure that makes these multipliers compound.

This creates a situation where both sides are improving at the same time, but not necessarily at the same pace. The gap will widen in favour of organisations that treat agentic security as a systemic capability rather than a tool purchase.

Rethinking the Role of Cybersecurity

All of this points to a deeper shift in the role of cybersecurity professionals.

The focus is no longer just on protecting systems that humans build. It is on securing systems that build and operate themselves.

This requires a different way of thinking, one that emphasises architecture, control design, and system behaviour over isolated vulnerabilities.

The report describes the broader engineering role transformation as a shift “from implementer to orchestrator.” The same evolution applies to security. Your value increasingly lies in designing secure-by-default environments for autonomous systems .. writing the “constitution” that agents and developers operate within .. rather than manually reviewing every pull request or responding to incidents after the fact.

Understanding how agents make decisions, how they interact with tools, and where trust boundaries exist becomes essential. So does the ability to anticipate how failures might propagate across interconnected, multi-agent systems. Security professionals who master this architectural thinking will be positioned to influence how these systems are built, not just how they’re patched after something goes wrong.

Preparing for the Future

Adapting to this new reality does not require abandoning existing security principles, but it does require applying them in new ways.

Security needs to be embedded earlier, not just in the development lifecycle but in the design of the agents themselves. This includes how they are instructed, what tools they can access, and how their actions are constrained. Controls must be built into the system, not layered on afterward.

Automation will also play a critical role. As systems move faster, security must keep pace by becoming more programmatic. Policies, guardrails, and monitoring capabilities need to be defined in code, enabling consistent enforcement at scale.

Identity is another area that will become increasingly important. Agents, in many ways, function like new types of users. They access resources, perform actions, and make decisions. Treating them as first-class identities.. with appropriate controls, least-privilege permissions, and continuous monitoring .. will be key to maintaining security. This applies doubly in multi-agent environments where each agent needs its own scoped identity rather than inheriting broad permissions from an orchestrator.

Finally, there is a need to rethink oversight. The report’s research shows that effective AI collaboration requires active human participation, not passive review. Humans will remain an essential part of the process, but their role will shift toward focusing on high-impact decisions and exceptions. The goal is not to review everything, but to build intelligent systems that handle routine verification while escalating genuinely novel situations, boundary cases, and strategic decisions for human input.

Final Thought

The 2026 Agentic Coding Trends Report is not just describing a new set of tools. It is describing a new model for how systems are built and operated.

In that model, change is constant, autonomy is increasing, and scale is no longer limited by human effort. Multi-agent systems are introducing coordination complexity we haven’t had to secure before. Non-technical teams are building production workflows without security training. And the speed of development is outpacing every manual review process we’ve relied on.

Cybersecurity cannot afford to approach this world with assumptions rooted in the past. Because in this new environment, you are no longer securing code that someone wrote.

You are securing systems that are constantly writing themselves.

And the sooner you start thinking that way, the better prepared you will be for what comes next.

The 0-20% full delegation rate is the statistic that cuts through the hype most effectively. Engineers doing AI-assisted work are still actively collaborating on 80% of tasks, which means autonomy is far from replacing professional judgment in complex work. The 27% figure for previously unfeasible tasks is equally important but gets less attention. That's where the real leverage sits: enabling work that couldn't have been attempted at all, not replacing existing work.

The orchestrator-as-domain-controller analogy is sharp. A compromised orchestrator in a multi-agent system propagates that compromise downstream in exactly the way an AD controller breach does. Security teams need to be thinking about agent privilege hierarchies the same way they think about service account privilege escalation. Most aren't yet, and that gap will be expensive for early enterprise adopters.

Lateral movement risk in multi-agent architectures is still underspecified in the security literature.