DEVIN Is Just The Start — Why Cybersecurity Professionals Should be VERY Worried

Are “AI Developers” the next big compromise in the Software Supply Chain?

“The End of Software Development As A Career”

Unless you have not been following the news, you will have heard about “AI Developers” recently.

Earlier this month, Cognition Labs proudly introduced us to its autonomous AI agent, “Devin”:

“Today we’re excited to introduce Devin, the first AI software engineer. Devin is the new state-of-the-art on the SWE-Bench coding benchmark, has successfully passed practical engineering interviews from leading AI companies, and has even completed real jobs on Upwork.

Devin is an autonomous agent that solves engineering tasks through the use of its own shell, code editor, and web browser.”

Oh boy.

Software developers who were already nervous about AI-generated code taking away their jobs now have fully automated, AI-powered software engineers to worry about!

The company has already shown the tool in action, which was quite impressive, to say the least.

It is able to resolve errors, debug code, and perform a variety of other tasks!

Although I am very skeptical that an AI will fully take over the role of a software developer, the massive investments being made in this space cannot be ignored.

I am not a software developer, but I am deeply worried about Devin AI and not because I think it will take away my job.

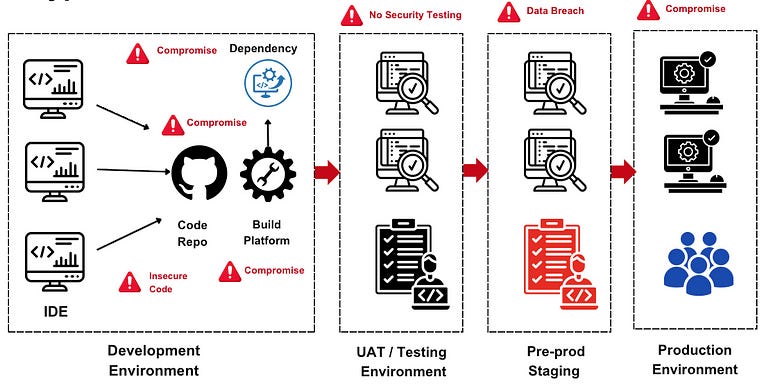

How This Might Impact The Software Supply Chain

If you have worked in Cybersecurity for the past couple of years, you have undoubtedly heard of Software Supply Chain Security.

Modern software is rarely built from scratch; instead, it is assembled from various components, libraries, and dependencies.

Every single one of them can be a potential point of compromise.

We have had incidents like the SolarWinds compromise, Log4j, and Codecov, to name just a few.

We have mitigated this by raising awareness about insecure code, hardening environments, artifact repositories, package servers, etc.

Now we have AI to add to the list of risks that need to be mitigated.

Until now, AI-powered coding was more about assisting developers in their jobs than replacing them entirely, so the risk was not that high.

But this is a different thing entirely!

As AI adoption increases, these AI-based agents will be able to commit code, access code repos and packages, and move code to production environments.

Previously, we had risks of software development environments being compromised via phishing or malware and spreading to the rest of the networks.

But what if an attacker can take over these AI-powered engineers?

Imagine an attacker being able to remotely control these sorts of agents and leverage their access to move within a network or reach sensitive codebases.

How exactly do you train an AI software engineer on what links are malicious and what are not?

Not to mention how much data an AI software engineer would need to train on to become good at a particular company’s codebase. Data that could be gold to a potential attacker if compromised.

Do not forget about AI hallucinations that could lead to faulty or insecure code being generated and introduced into production systems.

Without clear standards and frameworks, relying on tools like Devin without human oversight could introduce a slew of risks that the fragile software supply chain is unprepared for!

How To Get Ready

I have mentioned the risks of Autonomous AI before, and this seems to be the next evolution of Generative AI, whether we are ready for it or not.

While we are far from mass adoption, and there will always be some level of human oversight present, there are a few controls that you can start brainstorming about today:

How will these AI agents authenticate and authorize themselves within an environment? Think about introducing additional checks and alerts if sensitive codebases are being accessed.

Subject code from AI developers to the same security checks you would carry out on code from human developers. Do not rely on the hype; assume the code is insecure by default.

Start thinking about alerts around these AI agents. Security events include an AI agent attempting to access sensitive code, anomalous behavior, data leakage, etc. Alerting on these events can help you detect and respond to security incidents in real time, reducing the impact of potential breaches.

Update your Vendor and Third-Party Risk Management programs. Establish clear contractual agreements and service level agreements (SLAs) to hold vendors accountable for maintaining the security and integrity of their autonomous AI systems!
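To make the first and third controls a little more concrete, here is a minimal Python sketch of how an AI agent's access could be authorized against an explicit allow-list, with an alert raised whenever it touches a sensitive codebase or is denied. Everything in it is an assumption for illustration: the repo names, the agent ID, and the `AgentIdentity` and `AccessMonitor` classes are hypothetical and do not correspond to any real product or API.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List

# Hypothetical list of codebases that should always trigger an alert.
SENSITIVE_REPOS = {"payments-core", "auth-service"}


@dataclass(frozen=True)
class AgentIdentity:
    """An AI agent with its own identity and an explicit repo allow-list."""
    agent_id: str
    allowed_repos: FrozenSet[str]


@dataclass
class AccessMonitor:
    """Authorizes agent actions and records alerts for later review."""
    alerts: List[str] = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, repo: str, action: str) -> bool:
        allowed = repo in agent.allowed_repos
        # Alert on every denied request and on any access to sensitive code.
        if not allowed or repo in SENSITIVE_REPOS:
            outcome = "allowed" if allowed else "denied"
            self.alerts.append(f"{agent.agent_id} {action} {repo}: {outcome}")
        return allowed


if __name__ == "__main__":
    monitor = AccessMonitor()
    agent = AgentIdentity("ai-dev-01", frozenset({"docs-site", "payments-core"}))

    monitor.authorize(agent, "docs-site", "read")      # allowed, no alert
    monitor.authorize(agent, "payments-core", "push")  # allowed, but alerted
    monitor.authorize(agent, "auth-service", "read")   # denied and alerted

    for alert in monitor.alerts:
        print(alert)
```

In a real environment, the allow-list would live in your identity provider or source control platform, and the alerts would flow into your SIEM rather than a Python list. The point is simply that an AI agent gets its own identity, least-privilege access, and monitoring, just like any other privileged account.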

I hope this made you start thinking about the new threat landscape ahead.

The risk from Devin and other AI-powered software engineers is not just insecure code; these tools require a fundamental rethink of how we view software supply chain security.

Exciting times ahead!